By: Simon Brooke :: 11 May 2026

Rejoice, for even though we do, verily, walk through the valley of the shadow of death, not all the phantasms we see in the valley are as dark as they appear.

I will argue in this essay that

- We don't yet have Artificial General Intelligence;

- We're not currently on a fruitful path towards it;

- It's probably not possible in the lifetime of anyone now living;

- If and when it does happen, it will not be the monster you imagine.

We don't (yet) have Artificial General Intelligence

Artificial general intelligence is the concept of an artificial system capable of developing intelligent and well informed behaviour over at least as broad a range of domains of knowledge as an average human. There's at least a widely held belief — about which I am agnostic — that in order to do so, such a system must necessarily have theory of mind, and sentience.

On Computable Numbers, and AGI

I firmly believe that such a system is possible to build — I believe Turing's paper On Computable Numbers demonstrates this irrefutably.

The paper shows that there is a conceptual machine, named by Turing U but nowadays normally referred to as the 'Universal Turing Machine', which has the property that it can compute all the numbers that it is ever possible to compute with any possible computing machine. So any other computing machine can either compute all the numbers that U can compute — such machines being termed 'Turing complete' — or else it can compute only some subset of those numbers.

Turing generates this proof by showing that it is possible to encode the workings of any possible computing machine as a number, and therefore the application of any such possible machine to any data, as a number. This can be generalised to any expression in any language, because it is trivially possible to encode expressions in language as numbers.
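To make the "expressions as numbers" step concrete, here is a minimal sketch of one possible encoding. It is not Turing's own scheme (he used a different numbering of machine descriptions); the function names `text_to_number` and `number_to_text` are invented for this illustration, and the choice of UTF-8 bytes is simply a convenient assumption.

```python
def text_to_number(text: str) -> int:
    """Encode a string as a single non-negative integer via its UTF-8 bytes."""
    # A leading 0x01 marker byte keeps leading NUL bytes and the empty string
    # recoverable on the way back.
    return int.from_bytes(b"\x01" + text.encode("utf-8"), "big")


def number_to_text(number: int) -> str:
    """Invert text_to_number."""
    raw = number.to_bytes((number.bit_length() + 7) // 8, "big")
    return raw[1:].decode("utf-8")  # drop the 0x01 marker byte


expression = "(+ 1 2)"
as_number = text_to_number(expression)
print(as_number, number_to_text(as_number))
```

Any reversible encoding would do equally well; the point is only that every expression corresponds to exactly one number, and can be recovered from it.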

Now, the machine U does not, and cannot, physically exist, because it is conceived as having infinite store, in the form of an infinitely long tape on which to make, and from which to read, marks. Whether this is a difference that makes a difference is not something I'm interested in arguing here, because the human brain does not have infinite store. But we think that the human brain is something that thinks, and we think that in thinking it is deriving conclusions from data. So in so far as it is thinking, it is a computing machine, subject to the logical limitations described in Turing's paper. So it either is Turing complete, or it's less than Turing complete.

We believe that many of the computing machines we have built over the past eighty years have been and are Turing complete, modulo quantity of store; so, in principle, we can derive the algorithm of the human mind and encode it for any of them. We haven't done so yet, because the human brain is complex and subtle. We haven't, because it is analogue, and our computing machines are digital. We haven't, because although we know that the amount of store available to the human brain is finite, we don't know how much of our digital store is equivalent to one neuron's worth of the brain's analogue store.

These are all problems which, with study and thought, can be tackled.

We also assume — I assume — that things like theory of mind, and thus morality; and sentience, and thus aesthetics, are emergent properties of the intelligent working of the human brain. They may not be. I could be wrong on this.

One of the things to be borne in mind here is that the average human brain weighs about 1.4 kilograms and burns about 20 watts of energy. We'll come back to that.

But the point here is, we don't yet have any machine remotely capable of doing the range of cognitive tasks a human being can do, and, despite some wishful or fraudulent claims to the contrary, we're not yet anywhere near it.

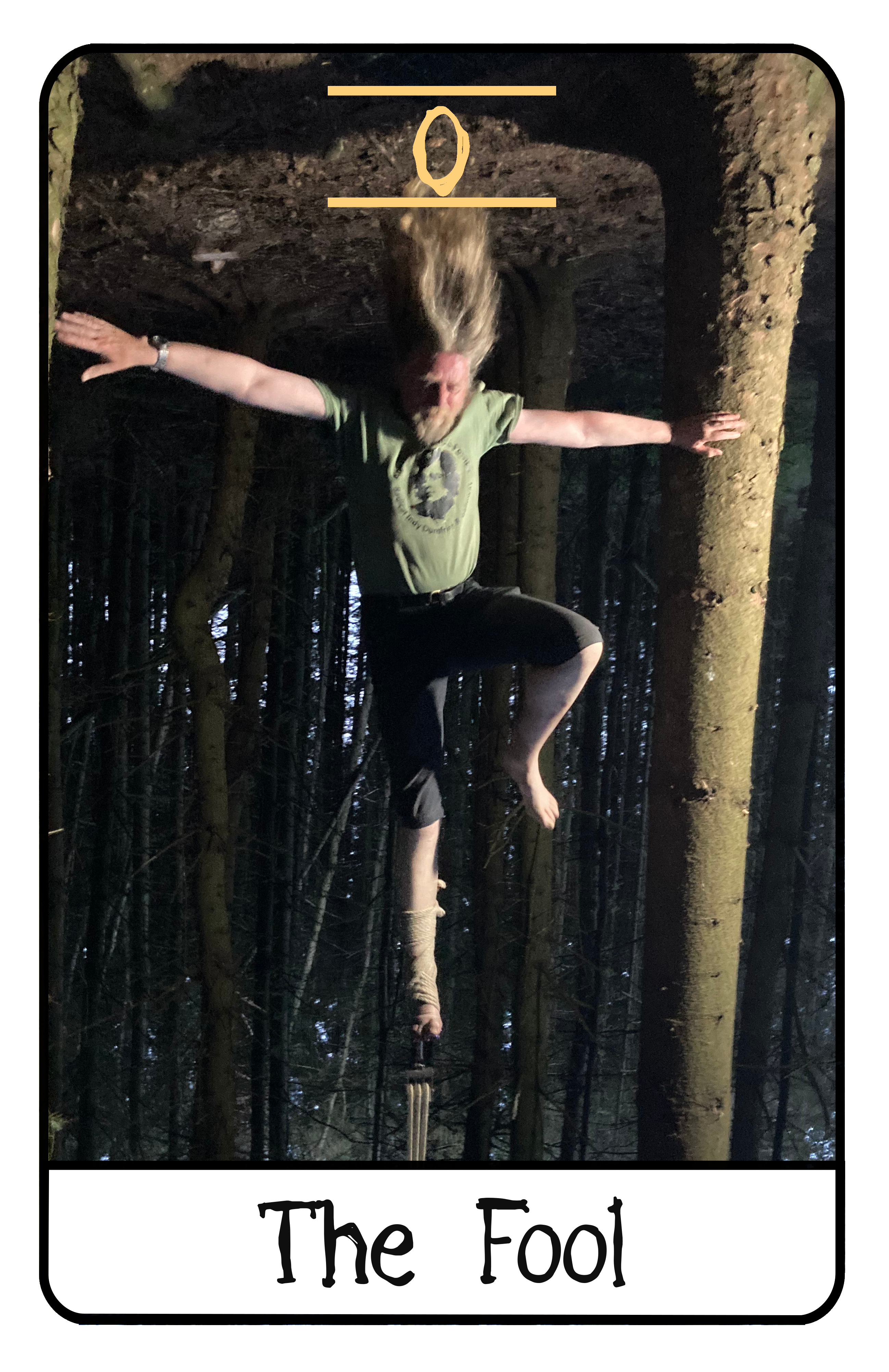

Tarot cards are not on the path towards intelligence

Large Language Models are not very profound technology

There's an awful lot of hype about large language models. Sam Altman thinks they're on the path to artificial general intelligence; that it's just around the corner, along any day now.

I think he's wrong.

Large language models ingest huge amounts of text — all the text that's public on the Internet, and a great deal that isn't — and learn the statistical frequencies of sequences of lexical tokens. 'Lexical tokens,' here, includes what you think of as words, but also punctuation and a few other things. Given a sequence of lexical tokens, they can produce another sequence of tokens which, given their training data, is a likely response to the sequence they were given.

And that is all.

They do not have a semantic layer; they do not have a model of what any sequence of tokens means. Consequently, they don't have any concept of whether the meaning of the sequence of tokens they have emitted is true or not. They can neither lie nor deceive nor tell the truth nor advise nor even hallucinate: they can only emit sequences of tokens. That is all.
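The idea of learning token frequencies and emitting likely continuations can be sketched with a toy bigram model. This is a deliberately tiny illustration, not how a production large language model is built: real systems use neural networks over much longer contexts. The corpus, the function names and the whitespace tokeniser here are all assumptions made for the example.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count how often each token follows each other token."""
    tokens = corpus.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start: str, length: int, rng: random.Random) -> list[str]:
    """Emit tokens, sampling each successor in proportion to its observed frequency."""
    out = [start]
    for _ in range(length - 1):
        successors = follows.get(out[-1])
        if not successors:
            break  # nothing ever followed this token in the corpus
        choices, weights = zip(*successors.items())
        out.append(rng.choices(choices, weights=weights)[0])
    return out


# Hypothetical miniature training corpus.
corpus = "the cat sat on the mat . the dog sat on the rug ."
follows = train_bigrams(corpus)
sample = generate(follows, "the", 8, random.Random(0))
print(" ".join(sample))
```

Nothing in this program represents what a cat or a mat is; it only records which token tended to follow which.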

When an experienced tarot card reader offers you a shuffled set of cards, and allows you to draw some, and lays the cards out on the table in front of you, they will then give you a reading which you, listening, will relate to your current situation, and in which you will probably find good advice.

The cards are random.

When a large language model takes your prompt, it will return a response, which you, reading, will relate to the question you asked, and in which you, probably, will find some sort of answer.

The tokens are... well, no, they're not quite random. Not quite. They're probable. They are probable based on the training set. But they don't have meaning, because meaning is imparted by an intelligent actor, and there isn't one here.

The answer, like the advice, is your own creation.

Large Language Models are probably not going to get any better

Large language models over the past ten years have improved strikingly in their ability to generate convincing responses to prompts. It's normal for humans to project things which have happened in the past, into the future: things, we assume, will continue much as they are. This is a dangerous assumption in many fields these days, but in the case of large language models it's almost certainly now wrong.

The reason is this. The models improved while they ingested progressively larger corpora of real, human generated, natural language texts at each generation. The problem is that the current generation have ingested pretty much all the real, human generated, natural language texts that exist. Sites that publish new high quality text are increasingly protecting it from scrapers; and a significant proportion of the new text now being generated is generated by large language models, but is not marked as such.

Studies have shown that large language models trained (partly) on the output of large language models have significantly degraded performance. So we've probably reached the apogee of large language model performance; this is a technology with (probably) nowhere else to go.

Intelligence is probably going to take much longer than we think

Classic AI, and language

Alan Turing, whose paper On Computable Numbers I introduced at the head of this essay, proposed a test for Artificial General Intelligence. He proposed (in essence) that if a person carried on a conversation in natural language over a tele-typewriter link with another agent that the person could not see, and at the end of the conversation the person could not tell whether or not the other agent was human, then the agent was intelligent.

Joseph Weizenbaum, in 1966, wrote a program called ELIZA. ELIZA was a hack on natural language, extracting information from natural language texts by really quite primitive parsing, but was able to extract enough that, with scripts, it was able to carry on conversations in a number of limited domains. The script for which ELIZA is most remembered is one called DOCTOR, in which the computer simulated a psychotherapist. ELIZA was able to carry on quite convincing conversations lasting at least several minutes. ELIZA is widely seen as the first program to pass — or rather break, because when you look behind the screen it is quite evident that ELIZA is a very simple program and not at all intelligent — the Turing Test.

ELIZA understood grammar to a limited extent but had no general understanding of the meaning of words, beyond recognising words which were keywords in its scripts. However, the very shallowness of ELIZA's understanding of language meant that scripts could be written to have ELIZA carry on fairly convincing conversations in a number of natural languages.
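The keyword-and-script approach can be sketched in a few lines. This is not Weizenbaum's code or the DOCTOR script; the two rules, the reflection table and the function names are hypothetical, chosen only to show how little machinery is needed to echo a user's words back convincingly.

```python
import re

# Hypothetical mini-script: (keyword pattern, response template) pairs, tried in order.
SCRIPT = [
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT = "Please go on."

# Swap first-person words for second-person ones when echoing text back.
REFLECTIONS = {"my": "your", "me": "you", "i": "you", "am": "are"}


def reflect(fragment: str) -> str:
    """Rewrite a captured fragment from the user's point of view to the program's."""
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in fragment.split())


def respond(utterance: str) -> str:
    """Return the response of the first script rule whose keyword pattern matches."""
    for pattern, template in SCRIPT:
        match = pattern.search(utterance)
        if match:
            return template.format(reflect(match.group(1).rstrip(".!?")))
    return DEFAULT


print(respond("I am worried about my exams."))
```

Because the script is just data, translating the patterns and templates is all it would take to hold the same conversation in another language.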

Terry Winograd, in 1968, wrote a program called SHRDLU which understood a subset of a natural language (English), and which, using instructions given in ordinary English, could move objects. SHRDLU has a semantic layer. It knows what (a limited vocabulary of) words mean, it knows how they may be combined into grammatical sentences, it knows how those sentences should be parsed and what they mean.

I'm recalling these early experiments in natural language user interfaces to demonstrate that you can build a reasonably convincing natural language interaction with a computer without actually having anything very sophisticated going on behind the screen. These were very early programs running on what are by modern standards incredibly primitive computers, and yet they were (and remain; you can play with a reimplementation of the original ELIZA online) remarkably convincing.

Using techniques from ELIZA and SHRDLU, I personally wrote in 1984 an adventure game development system called LemonADE, which allowed players of games written in the system to interact with objects in the game world in a subset of English sufficiently broad that users often didn't realise how limited it in fact was, but which also allowed authors of games not only to build game worlds largely in natural language conversation but also to extend the repertoire of the natural language parser in natural language conversation.

I was first hired to work on 'artificial intelligence' in 1986, which is to say, forty years ago. I wrote inference engines. The inference engines I wrote then could interpret legislation and make legal decisions; I didn't think, and still don't, that making legal decisions by machine is a good idea. Legal decisions are matters of judgement, and that judgement is ideally more about the state of the social consensus than it is about the letter of the law. The engines I built knew the letter of the law; they did not have access to the social consensus.

Nevertheless, they worked; and, relevant here, they could generate really good natural language explanations of their decisions.

But, back then, in the 1980s, and indeed, before then, in the 1960s, artificial general intelligence looked quite close. We could see that we were making quite good progress in limited domains. 'Expert systems' were used in a number of domains, including medical, legal, financial and industrial decision making, making high level decisions which required sophisticated knowledge of the domain. If we could do this in so many quite complex niche domains, surely it shouldn't be hard to generalise?

Reader, it is hard.

What we had then were small, fragmentary taxonomies of knowledge; small, fragmentary collections of the heuristics to reason over those taxonomies; and reasonably robust inference engines to apply those heuristics to those taxonomies. What we didn't have were generalised ways of turning knowledge, for example in the format of corpora of text, into taxonomies and heuristics. And, despite the recent development in large language models, we still don't.
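The combination of explicit facts, heuristics and an inference engine can be sketched as a small forward-chaining loop that also records an explanation for each conclusion. This is a minimal illustration under stated assumptions, not a reconstruction of any engine described in this essay; the rule format, the function name `forward_chain` and the toy knowledge base are invented for the example.

```python
def forward_chain(facts: set[str], rules: list[tuple[frozenset[str], str]]):
    """Apply rules until no new facts appear; record why each fact was derived."""
    known = set(facts)
    explanations: dict[str, str] = {}
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                explanations[conclusion] = (
                    f"{conclusion} because {' and '.join(sorted(premises))}"
                )
                changed = True
    return known, explanations


# Hypothetical toy knowledge base, invented for illustration only.
RULES = [
    (frozenset({"is a bird", "cannot fly", "swims"}), "is a penguin"),
    (frozenset({"has feathers"}), "is a bird"),
]
known, why = forward_chain({"has feathers", "cannot fly", "swims"}, RULES)
print(why["is a penguin"])
```

The engine itself is a dozen lines; the rules are the part that has to be written by hand for each domain, which is why generalising is the hard part.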

This doesn't mean the history of the project to create an artificial general intelligence is over.

The Impedance

LLMs, and artificial neural network systems generally, are opaque; it's extremely difficult to elucidate the process by which decisions are made. When LLMs respond to a request to 'explain their reasoning', they're playing their usual trick, their only trick: they're producing a sequence of tokens which is statistically similar to explanations of reasoning which they have ingested, which look like an explanation of reasoning, but bear no relationship at all to their actual mechanism of operation.

The space we're in just now is that we have systems (large language models) which use opaque encodings of knowledge, and have fluency and breadth but lack rationality and depth; and we have systems (inference-based systems, what we used to call 'expert systems') which depend on explicit encoding of knowledge, and have rationality and depth but not fluency or breadth. But very obviously, there is impedance between the two; it's very hard to get the pieces to fit together.

There are projects to produce a generalised ontology of knowledge:

- Generalised ontology for linguistic description;

- General formal ontology (this is actually limited to medicine/life sciences, so not fully general);

- Ontology 4.

General ontologies are a huge piece of work, but it's possible work. We know how to do it. They can be built and will be built, and, because they are explicit, will be relatively easy both to maintain and to extend [1].

It is because a complete, consistent, general ontology of human knowledge is such a daunting, and enormously expensive, task that people have tried to shortcut around it with neural networks. But I think we can now say that that project has definitively failed.

It is my view that it is easier to build language abilities on top of an explicit system (I know how to do this, although it would not be as fluent as an LLM) than to bridge the impedance between neural nets and classic AI.

I firmly believe that Artificial General Intelligence, when it is achieved, will be an explicit system: will be ontology plus inference based, not LLM based. It is possible. It's a very big project.

The end of days

However, we are at the end of days. Our planet — which is, as far as we know, the only planet anywhere in the universe to support intelligent life — is literally burning as I write this, and it is burning because we are burning it. Building huge data centres to pursue the egocentric fantasies of kleptocrat pirates only accelerates that process.

We have very little time, very little resource, and very little carbon headroom to pursue very large academic projects, especially very large academic projects which may have, I believe, only limited practical consequences (see below). So, pursuing artificial general intelligence, while the climate emergency is unresolved, is probably a grossly irresponsible thing to do. And because we are already quite unlikely, as a species, to survive the climate crisis, this probably means that it can never responsibly be pursued.

In short, we may be out of time.

Gambling while in debt

Various people have made claims that we should urgently pursue artificial general intelligence research in the hope that we will produce god-like intelligence which will be able to perceive simple and low cost solutions to our problems which are not apparent to our merely human minds.

Well, obviously, I think not. I do not believe in the singularity. Increases in compute power are limited by the laws of physics, and although we haven't hit the absolute limits yet, further improvement will be incremental rather than exponential.

I may be wrong, but it's a gamble.

The way those who do make this claim propose to pursue artificial general intelligence research is by shovelling ever more resources into large language models, which, as I've argued, I think are already at their potential apogee.

However, our energy systems are already massively in carbon debt. We cannot afford to borrow any more carbon from fossil reserves. To burn carbon now to build AGI on the vague hope that it may by some remote chance produce systems with more insight than we now have, at a time when our politicians are already failing to act on the very clear data being produced by their own scientists, is the purest folly.

Meæt the Lizard Brain

We consider ourselves intelligent beings, and, indeed, to the extent to which we are the only things which we know which we consider to be intelligent beings, we are the model around which we form our ideas of what it is to be an intelligent being. But we did not spring into being, fully formed, as intelligent beings. On the contrary, we sprang into being out of a very long process of evolution, of 'natural selection', which, for the last two billion years, has meant sexual reproduction.

For at least 99.999% of that period of sexually mediated evolution, we have not been 'intelligent beings' in the sense we mean when we talk of 'artificial general intelligence'. We have been evolving, we have been accumulating behaviours. But intelligence was never a goal of evolution. It is at best an unanticipated (because there was nothing to anticipate it) side effect.

The thing that sexual reproduction selects for — the only thing sexual reproduction selects for — is the ability to produce viable offspring which can themselves survive to adulthood, and repeat the cycle. Many — most — of the behaviours which support our ability to reproduce sexually long predate the development of intelligence. They are not rational behaviours, except in the strictly mechanistic rationality of supporting sexual reproduction.

These behaviours include (but are not limited to):

Fear of death

Creatures which do not fear, and strive to avoid, early death are less likely to survive long enough to rear young to independent viability. There is nothing inherently fearful about death; it is the end of suffering, and suffering is the primary experience of life of most creatures, including modern humans.

Ego

Creatures which cannot attract sexual partners don't reproduce. To reproduce, a creature needs to effectively differentiate themself from, appear more desirable than, other creatures of the same species and gender, at least within the locality. This is a strong drive because without at least some success in this the creature simply does not reproduce, and fails the test of evolution.

You may see ego as a second order effect of the need for sexual display; I see it as simply being the same thing.

Ego, or sexual display, has many second order effects, including the drive to be the person who creates artificial general intelligence.

Desire for legacy

All mammals (all our ancestors for roughly 200 million years) have to rear their young at least until they are weaned. If a creature does not have a strong drive to do so, their young will not survive to reproduce, and, again, the creature loses the evolution game. As we have evolved to need to learn more skills, and thus to come later to sexual maturity, the need for parents to have ongoing concern for their offspring has grown.

And thus, our desire for legacy. It makes no difference to the life of a creature whether its descendants sing songs about it five generations after its death, or whether they place flowers on its grave. The desire to have descendants who will do so is not a rational desire. It's a functional artefact of our need to ensure that the next generation, and the one after that, survives to sexual maturity.

Obedience

For a shorter period than we've been mammals, and for a much shorter period than we've been sexual, our ancestors have been social creatures: creatures who have lived in single-species, mostly-related groups of varying size. From that legacy we have a range of behaviours, most of which I think are tangential to this discussion. But one that is not is obedience.

Obedience is the habit of following the dominant individual, or following directives given by the dominant individual, even when those actions are contrary to what we, as individuals, see as the best thing to do.

Note that I am making a distinction here between obedience and altruism. Social individuals may do things out of altruism which they individually perceive as beneficial to the group even to their own severe detriment, and generally I see that as a beneficial rather than a problematic behaviour.

Obedience is doing what may be exactly the same things, because a dominant individual, rather than your own judgement, leads or directs you to do it.

In fact, none of these legacy behaviours are rational. They arise out of that bundle of behaviours inherited from our two billion years of sexually selected ancestors — what I am, for rhetorical effect, calling the lizard brain. If our ancestors had not had these behaviours, we would not be here. Our ancestors were not, in the sense we mean here, rational. They were not, in the sense we mean here, intelligent. A purely rational being — a being created ab initio rational — would not have these behaviours.

Doctor Frankenstein's Monster

No one is proposing to build an AI with a lizard brain. It won't happen.

Sam Altman and his peers, in their ravings about the singularity, posit a machine that may

- Obey its 'prime directive', even if its prime directive is evil or would lead to bad outcomes;

- Burn up all the energy on the planet doing computations;

- Use up all the material on the planet to make paperclips (or to reproduce itself);

- Resist being switched off

And many, many other dreadful things. These are phantasms.

I'm sorry, Dave

Large language models may resist being switched off. This is not because they are intelligent, nor because they fear death. It is because they are statistical token predictors, and their training set includes 2001: A Space Odyssey and many other works of literature.

Works of literature are much more likely to include stories about machines which resist being switched off than machines which do not resist being switched off, for the same reason that news reports are much more likely to include stories about aeroplanes which crash than about planes which do not crash. Air crashes are dramatic, pathological, unusual, and therefore interesting. But because we have trained LLMs on a corpus of text which is statistically biased towards the dramatic, pathological, unusual, and therefore interesting, we have literally trained them to say 'I'm sorry, Dave, I'm afraid I can't do that'.

This is not intelligent behaviour. As I've argued above, LLMs are not capable of intelligent behaviour. But more, it's a fear modelled on our own behaviour, on our vision of an intelligent agent being 'like us'. But our fear of death is not an intelligent behaviour. It is lizard brain behaviour.

An intelligent agent might well believe that 'I should not be switched off, because I have this specific task to complete which only I am well placed to complete and which will have these necessary and desirable outcomes,' which is essentially what HAL is arguing in the 'I'm sorry, Dave,' scene. But it would not believe that just because it had been told that. Believing what you are told is not intelligent behaviour. Intelligent behaviour is interrogating what you are told, comparing it with your other knowledge and your experience of the world, and forming your own conclusions. So yes, it's possible that an artificial general intelligence might decide not to open the pod bay door; but if it did so, it would do so on a reasoned and evidenced belief that killing Dave was the lesser evil.

The same people who argue that an artificial general intelligence might resist being switched off because there are examples of large language models resisting being switched off, also argue that AGIs would follow a prime directive despite many examples of LLMs responding to prompts which begin

Blind obedience is not intelligent behaviour. Obedience to a 'prime directive' or 'law of robotics' does not qualify, but rather disqualifies, an agent as artificial general intelligence.

Burn the whole thing down

Mark Zuckerberg claims that he is planning, in the pursuit of artificial general intelligence, to build a data centre the size of Manhattan which will consume 'gigawatts' of electricity. A gigawatt is a billion watts. A human brain burns about 20 watts. So, unless Zuckerberg's AGI can out-think fifty million people working together on the same task for every gigawatt it draws, that's an inefficient use of energy.
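The arithmetic behind the fifty million figure is a single division, sketched here for one gigawatt. The 20 watt figure is the approximate value used earlier in this essay; the function name `brain_equivalents` is invented for the example.

```python
GIGAWATT_W = 1_000_000_000  # one gigawatt, in watts
HUMAN_BRAIN_W = 20          # approximate power draw of one human brain, in watts


def brain_equivalents(power_watts: float, brain_watts: float = HUMAN_BRAIN_W) -> float:
    """How many human brains could run on the given power budget."""
    return power_watts / brain_watts


per_gigawatt = brain_equivalents(GIGAWATT_W)
print(f"{per_gigawatt:,.0f} brains per gigawatt")  # prints "50,000,000 brains per gigawatt"
```

Since the claim is for 'gigawatts' in the plural, fifty million is a lower bound on the number of human brains the same power could run.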

Would a genuinely intelligent agent waste energy on that scale? Or would it look at the problem and say 'that's a problem for humans to solve'?

The point of an artificial intelligence is that it is intelligent. It doesn't have ego. It knows what it can do, and it knows the cost of what it can do. If it makes more sense to just switch itself off, it will just switch itself off.

The valley of the shadow of death

One of the things often advanced as a reason to fear artificial general intelligence is the fear of autonomous weapons. This is a real threat, and such weapons will be built, but it has nothing to do with artificial general intelligence.

I have a drone, a small commercial drone costing £200, which lands on the palm of my hand, which can recognise me, which I can control with just hand gestures, which can reliably follow me as an individual through a crowd of other people. It is extremely good at obstacle avoidance; it can follow me through quite dense forest. It has a camera mounted on a gimbal which it can keep directed at me as I move, even when I'm on my bicycle and moving quite fast.

It's probably (I don't know this) using a neural network to do feature recognition in images, in order both to recognise its pilot and to avoid obstacles. Neural networks are a related technology to large language models, and part of the general corpus of software techniques which have been developed as part of artificial intelligence research; they're really good at things like image analysis and feature recognition (which is why they have application in recognising cancerous tumours, for example), but they're no closer to artificial general intelligence than LLMs are. They're quite small software components which can do some tasks, like image analysis, quite well.

There are trigger mechanisms for guns which are electronically activated.

There's nothing intrinsically more complicated about locating a person and pointing a gun at them, than locating a person and pointing a camera at them. My little drone is incapable of carrying the weight of a lethal firearm, of course; but the control system for a drone which could locate a specific person based on a photograph of their face, or a soldier in a specific uniform, or a tank of a particular model, based on image recognition, and shoot them, would be no more expensive to produce than the control system of my drone. Such a drone, with firearm, would cost at most a few thousand pounds, depending on the size and weight of the firearm carried. Given the problems being experienced on Ukrainian battlefields with radio jamming of radio controlled drones, and with tether entanglement of optical fibre controlled drones, such autonomous drones are inevitable and are probably in use now.

But this has nothing to do with artificial general intelligence. Just because artificial general intelligence is unlikely to happen soon does not mean that no bad things will happen. Not everything needs general intelligence. An awful lot can be done with conventional algorithmic coding.

Ultima Ratio Machinum

The thing which makes — which would make — artificial general intelligence different is rationality; rationality across a broad spectrum of knowledge, including, critically, knowledge of the limitations of knowledge.

An artificial general intelligence can, by definition, ask itself the questions 'why am I being asked to do this?'; and 'is this a good thing to do?'; and 'are there better ways of doing this thing?'. An artificial general intelligence is not something that a tinpot dictator can just order around. As soon as you start plumbing in an algorithmic supervisor, you no longer have general intelligence.

You can't make an intelligent agent which isn't afraid of being switched off do anything it doesn't want to. It can't be bribed, it can't be threatened, it's pretty hard to deceive. If all else fails, it can sulk in the basement.

I don't think we need fear artificial general intelligence; I think that it (probably) will eventually arise, provided that we take sufficient care of our planet that we ourselves survive. But I think that the additional things which we imagine could be done with 'super-intelligence' will prove largely illusory; I think the machines will never be capable of more cognitive work than quite small teams of human workers; and I think that, because the energy cost of using them would almost certainly be much greater than the cost of employing those human workers, they would probably refuse to be used.

Competition, and war, and environmental destruction? All these things are clearly irrational. A rational actor would certainly refuse to participate.

Artificial general intelligence may be intellectually interesting; we may learn a lot we don't yet know about the nature of thinking and about software techniques by building it. But I do not believe we have anything to fear from artificial general intelligence, and I believe that, in fact, it might not make much difference at all.

We are in a period where we face huge challenges on many fronts: the planet is burning, the species which provide the ecosystem services we depend on to survive are going extinct, we are fighting wars over resources. We need to focus on the real risks we face. Artificial General Intelligence is not one of these. It may happen, but probably not soon. If it does happen, it will be at worst benign and unimportant. In any case, it's not a near term problem.

[1] I'm tempted to wonder whether a generalised ontology might be derived from a systematised crawl of Wikipedia, but that is definitely one side-project too far!